Nicole Torrico

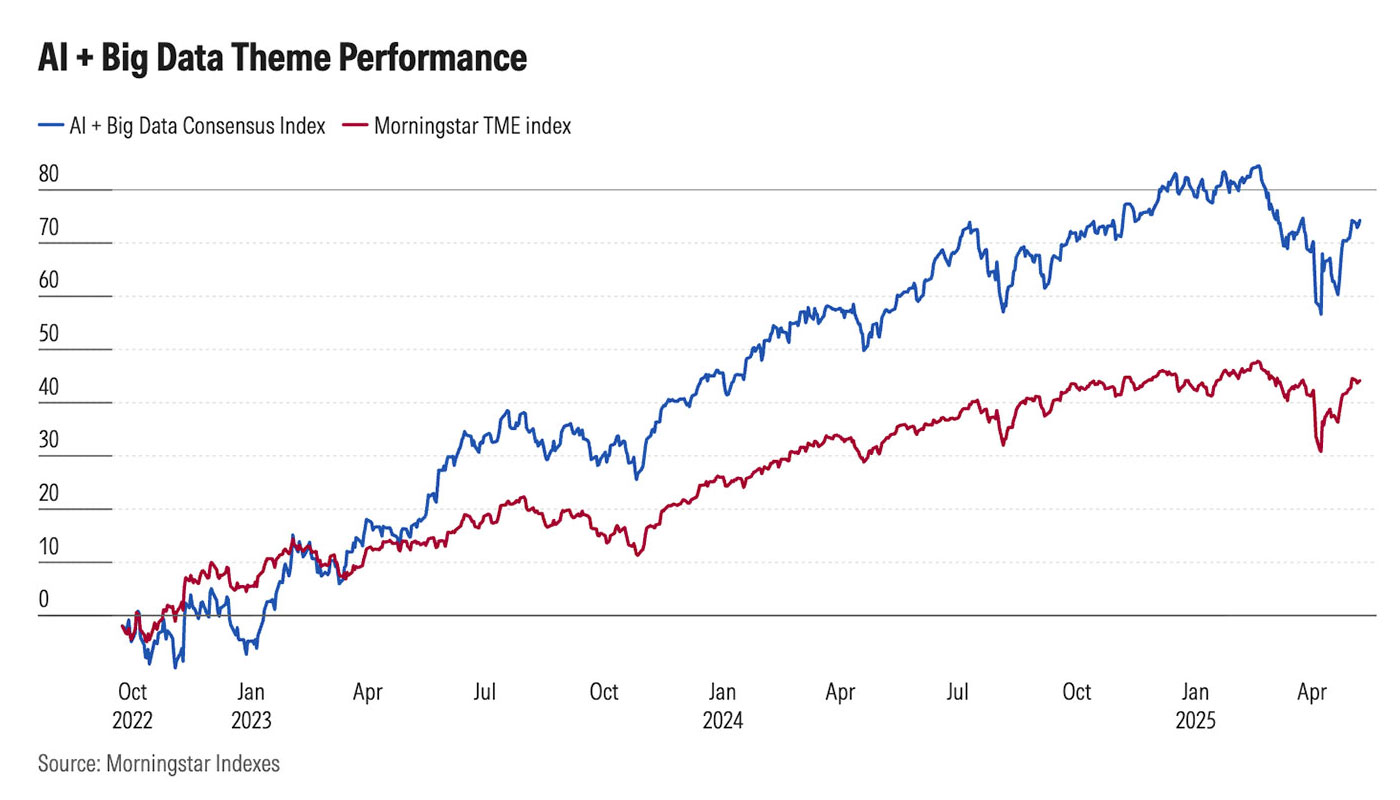

Artificial intelligence presents both significant risks and extraordinary opportunities for institutional investors. Investments in AI and big data companies have dramatically outpaced broader market returns (see Figure 1), underscoring why endowments and foundations need to pay close attention to the potential investment opportunities within this rapidly growing sector.

At the same time, the risks associated with AI investments are numerous, including risks related to human rights (privacy, surveillance, algorithmic bias); democracy (misinformation, electoral integrity); youth well-being (digital wellness, educational and critical thinking outcomes); labor and workforce impacts (automation, job displacement); and environmental impact (energy and water use from data centers and device usage).

Figure 1: AI + Big Data Theme Performance (2022 to 2025)

Understanding these opportunities and risks is essential, yet it seems every discussion about AI reveals just how much more there is to explore. Over the past year, the Intentional Endowments Network has begun supporting endowments and foundations in evaluating AI in their portfolios. As the network has explored these critical issues, we have identified key resources that provide effective frameworks for developing a shared understanding of the risks and opportunities facing intentional investors in the age of AI.

There are several material factors to consider, not just in relation to portfolio companies with dedicated AI strategies, but also across sectors where AI is used in the context of broader work (e.g., automation, hiring and talent management, and financial services risk assessment). There are multiple taxonomies for understanding these risk factors, including those provided by the Institutional Limited Partners Association, Reframe Venture (formerly Venture ESG) and the World Economic Forum.

Naming, Identifying and Mitigating Risks

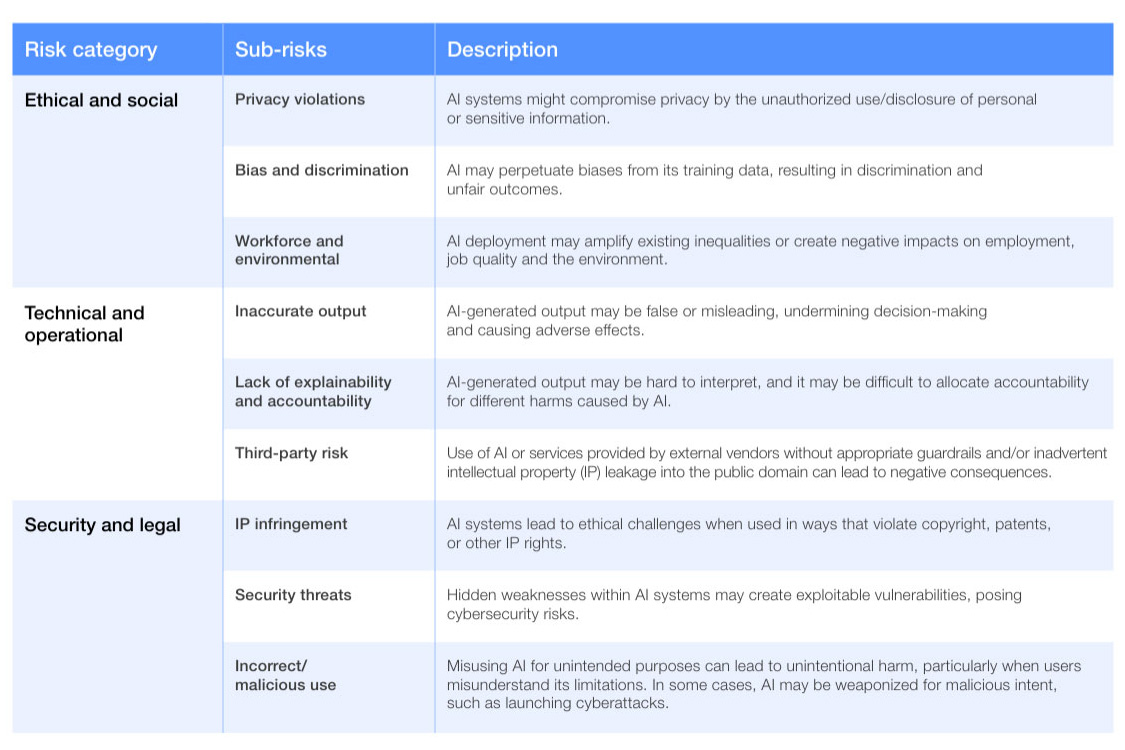

While framing differs across the referenced documents, they are highly complementary and overlap significantly. The World Economic Forum lays out the broadest categorization via three primary risk categories: ethical and social; technical and operational; and security and legal.

Each category then includes illustrative sub-risks and descriptions (see Figure 2), based on research from McKinsey & Co. The ILPA’s framework is concise and emphasizes alignment with traditional environmental, social and governance metrics. Reframe Venture provides the most technically detailed framework, addressing the full AI value chain.

Figure 2: World Economic Forum, Categories of AI Risks

Each framework also addresses (to varying degrees) long-term societal risks, including risks to the global financial system, supply chains, labor markets and the broader environment. These risks are often referred to as system-level risks: aggregate, non-diversifiable risks that threaten the entire economy and long-term portfolio performance.

Majority Action’s 2025 report, “Emerging Technologies, Evolving Responsibilities: Why Investors Must Act to Mitigate AI’s System-Level Impacts,” critically framed AI risks not as isolated company-specific concerns, but as externalized, system-level risks that threaten the stability of the entire economy and long-term portfolio performance across all asset classes.

The report identified two essential roles for institutional investors in addressing these risks. First, one of self-defense: Institutional investors are universal owners that can diversify away from underperforming stocks, but will not be able to avoid the long-term performance risk to the whole portfolio if these risks are realized across the economy. Second, a role of last-defense: Given the current inadequacy of U.S. regulatory frameworks to manage AI-related risks, investors are becoming a last line of defense for ensuring that AI is developed and deployed responsibly. To protect long-term returns and prevent the realization of these risks, institutional investors must consider investment principles and governance standards that ensure responsible AI design, deployment and accountability across portfolio companies.

Responsibly Deploying Capital to AI

While risk mitigation remains essential, AI also presents significant opportunities for positive impact when designed and deployed responsibly. Reframe Venture’s framework for responsible AI identifies three strategic pillars of AI’s transformative potential:

- efficient resource allocation (optimizing time, labor and infrastructure across sectors);

- enhanced service delivery (expanding access to personalized, high-quality services at scale, particularly benefiting historically underserved populations); and

- cutting-edge research and innovation (accelerating scientific progress, including in drug discovery, climate modeling and energy forecasting).

Institutional investors have a unique opportunity to direct their capital across the AI stack, toward not just AI applications but also assurance technologies and infrastructure that benefit humanity. The investment case for AI is strengthened by projections of substantial economic value creation. Goldman Sachs estimated that widespread AI adoption could drive a 7% increase in annual global GDP over a 10-year period. This transformative potential is reflected in capital flows, as AI captured approximately 50% of all global venture funding in 2025, totaling $202.3 billion and representing a 75% increase from 2024.

With responsible implementation, AI’s adoption across industries can drive growth and innovation at scale. Institutional investors are uniquely positioned to address the risks described above through due diligence and engagement with companies, external managers and the broader ecosystem. The WEF framework offers concrete next steps for investors seeking structured approaches to evaluating AI risks and opportunities, supporting both value creation and risk mitigation across their portfolios and the broader financial system.

In the next item in this series, we will dive into the importance of—and how to start—engaging with investment managers and consultants about AI strategies, exposures and risk management practices.

Nicole Torrico is the managing director of the Intentional Endowments Network, a peer learning network of more than 250 endowments, foundations and other institutions advancing rigorous, long-term investment strategies that address climate and social inequality as core fiduciary concerns.

This feature is to provide general information only, does not constitute legal or tax advice, and cannot be used or substituted for legal or tax advice. Any opinions of the author do not necessarily reflect the stance of CIO, ISS Stoxx or its affiliates.


Tags: Artificial Intelligence, Endowments, Foundations, Intentional Endowments Network, Portfolio Construction